Advanced RAG Patterns for Production (2026)

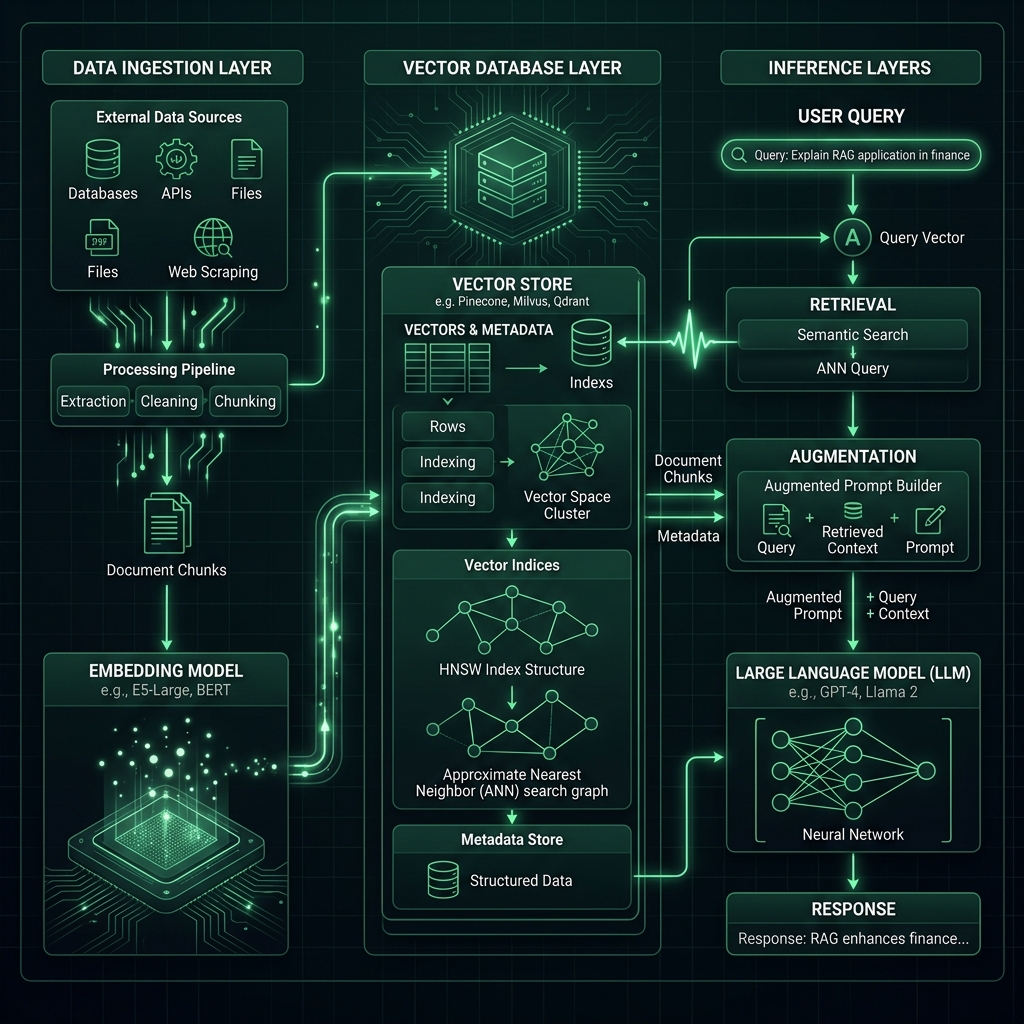

Retrieval-Augmented Generation (RAG) has evolved from simple vector lookups into multi-stage pipelines. This course explores the patterns required to build systems that are not just "smart," but reliable enough to run in production.

1. The Retrieval Bottleneck

Standard RAG systems often suffer from "semantic noise." When a user asks a nuanced question, a simple vector search might return chunks that sit close to the query in embedding space but are irrelevant in context.

Hybrid Search Orchestration

To solve this, we implement Hybrid Search. This combines:

- Dense Vectors: Capturing the "vibe" of a query, meaning its semantic intent and paraphrases, using embedding models such as Gemini's.

- Sparse Keywords (BM25): Catching specific technical terms, part numbers, or unique identifiers that vector models might overlook.
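
One common way to combine the two result lists is Reciprocal Rank Fusion (RRF). The text above does not name a fusion method, so treat RRF as an assumption here; the document IDs and the constant k=60 are illustrative placeholders. A minimal sketch:

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Every list contributes 1 / (k + rank) to each document it contains
    (rank starts at 1). Documents are returned by descending fused score.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by score (highest first); break ties by ID so output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))

# Hypothetical results from a dense (vector) retriever and a BM25 retriever.
dense_hits = ["doc_a", "doc_b", "doc_c"]
sparse_hits = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([dense_hits, sparse_hits])
print(fused)
```

Because RRF uses only ranks, it avoids having to normalize cosine similarities against BM25 scores, which live on different scales.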

2. Corrective RAG (CRAG)

In a production environment, you cannot trust the retriever blindly. Corrective RAG adds a self-evaluation layer that grades the retrieved documents before they reach the generator. If the documents score below a confidence threshold, the system triggers a fallback: either a broader search or web-search augmentation.
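
The control flow can be sketched as a confidence gate around the retriever. Everything named here is hypothetical: the `retrieve` and `fallback` callables, the 0.5 threshold, and the assumption that each document already carries a confidence score in [0, 1] from some evaluator.

```python
from typing import Callable, List, Tuple

Scored = List[Tuple[str, float]]  # (document text, confidence in [0, 1])

def corrective_retrieve(
    query: str,
    retrieve: Callable[[str], Scored],
    fallback: Callable[[str], Scored],
    min_confidence: float = 0.5,  # assumed threshold; tune on your own data
) -> Tuple[Scored, str]:
    """Return (documents, source), where source is 'primary' or 'fallback'."""
    docs = retrieve(query)
    confident = [(text, score) for text, score in docs if score >= min_confidence]
    if confident:
        return confident, "primary"
    # No document cleared the threshold, so use the fallback path instead.
    return fallback(query), "fallback"

# Stub retrievers standing in for a vector store and a web search.
def weak_retriever(query: str) -> Scored:
    return [("unrelated chunk", 0.12), ("vaguely related chunk", 0.31)]

def web_search(query: str) -> Scored:
    return [("web result for: " + query, 0.80)]

docs, source = corrective_retrieve("warranty terms", weak_retriever, web_search)
print(source, docs)
```

A fuller design might add a middle band where both primary and fallback results are kept; this sketch shows only the two-way gate described above.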

3. High-Fidelity Re-Ranking

Initial retrieval might return the top 20 documents, but the most relevant one might sit at position 7. Large Language Models (LLMs) have a "lost in the middle" problem: they tend to under-use context placed in the center of a long prompt. Re-rankers (typically cross-encoders, which score the query and each document together rather than comparing precomputed embeddings) re-evaluate those 20 documents and move the best matches into the top 3 positions.
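
The re-ranking step itself is small once a pairwise scorer exists. In the sketch below, `score_pair` is a placeholder for a cross-encoder's relevance score; the `token_overlap` function is only a toy stand-in so the example runs without a model, and the candidate texts are invented.

```python
from typing import Callable, List

def rerank(
    query: str,
    candidates: List[str],
    score_pair: Callable[[str, str], float],
    top_n: int = 3,
) -> List[str]:
    """Score every (query, document) pair and keep the top_n documents."""
    scored = [(score_pair(query, doc), doc) for doc in candidates]
    # Stable sort: documents with equal scores keep their retrieval order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_n]]

def token_overlap(query: str, doc: str) -> float:
    """Toy scorer: fraction of query tokens that appear in the document."""
    query_tokens = set(query.lower().split())
    doc_tokens = set(doc.lower().split())
    return len(query_tokens & doc_tokens) / len(query_tokens) if query_tokens else 0.0

candidates = [
    "shipping policy overview",
    "how to reset the device",
    "reset your device password in settings",
    "password rules",
]
top = rerank("reset device password", candidates, token_overlap)
print(top)
```

Swapping `token_overlap` for a real cross-encoder changes only the scorer; the sort-and-truncate logic stays the same.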

4. Query Decomposition & Sub-Queries

Complex architectural questions often require data from different sources.

- Sub-Query Decomposition: Breaking "Compare Anumati and Drishti" into two separate searches.

- Recursive Retrieval: Finding a document, then searching within its specific sub-sections for more detail.
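
Sub-query decomposition can be illustrated with a deliberately simple rule. Production systems usually ask an LLM to produce the sub-queries; the regular expression below is a stand-in for that step, and the `search` function and its corpus are hypothetical.

```python
import re
from typing import Callable, Dict, List

COMPARE_PATTERN = re.compile(
    r"\s*compare\s+(.+?)\s+and\s+(.+?)\s*[.?]?\s*", flags=re.IGNORECASE
)

def decompose_comparison(question: str) -> List[str]:
    """Split 'Compare X and Y' into one sub-query per entity.

    Questions that do not match the pattern are returned unchanged.
    """
    match = COMPARE_PATTERN.fullmatch(question)
    if not match:
        return [question]
    return [match.group(1), match.group(2)]

def retrieve_for_all(
    question: str, search: Callable[[str], List[str]]
) -> Dict[str, List[str]]:
    """Run one search per sub-query and keep the results grouped by sub-query."""
    return {sub: search(sub) for sub in decompose_comparison(question)}

# Hypothetical corpus lookup standing in for a real retriever.
corpus = {"Anumati": ["Anumati overview"], "Drishti": ["Drishti overview"]}

def search(term: str) -> List[str]:
    return corpus.get(term, [])

results = retrieve_for_all("Compare Anumati and Drishti", search)
print(results)
```

Keeping the results grouped by sub-query lets the generator see which evidence belongs to which side of the comparison.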

5. Specialist's Insight: Unit Economics

Every RAG step adds latency and cost. A production architect must balance Precision vs. OpEx. A 3072-dimension vector typically gives higher retrieval accuracy than a lower-dimensional one, but it increases database storage and compute requirements in proportion to its size. Always benchmark your retrieval recall against your business requirements.
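
The storage side of that trade-off is simple arithmetic. The sketch below assumes uncompressed float32 values (4 bytes each) and ignores index overhead and metadata; the corpus size of one million chunks and the 768-dimension comparison point are illustrative choices, not figures from this course.

```python
def index_size_bytes(num_vectors: int, dims: int, bytes_per_value: int = 4) -> int:
    """Raw vector storage, excluding index structures and metadata."""
    return num_vectors * dims * bytes_per_value

NUM_CHUNKS = 1_000_000  # assumed corpus size

full = index_size_bytes(NUM_CHUNKS, 3072)
small = index_size_bytes(NUM_CHUNKS, 768)

print(f"3072 dims: {full / 1e9:.2f} GB")
print(f" 768 dims: {small / 1e9:.2f} GB")
print(f"ratio: {full / small:.1f}x")
```

Under these assumptions the raw vectors take about 12.29 GB at 3072 dimensions versus about 3.07 GB at 768, a 4x difference that also applies to the cost of every brute-force distance computation.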